Patients warned on risks of uploading medical records to AI chatbots

NEW YORK, UNITED STATES — As more Americans turn to AI chatbots like ChatGPT for health guidance, experts are raising alarms about the risks of uploading sensitive medical records, according to a report from The New York Times.

Patients are sharing blood tests, surgical reports, and doctor notes in hopes of quicker answers, but clinicians warn that such practices could compromise both personal privacy and the broader healthcare system.

When AI diagnoses help — and when they miss

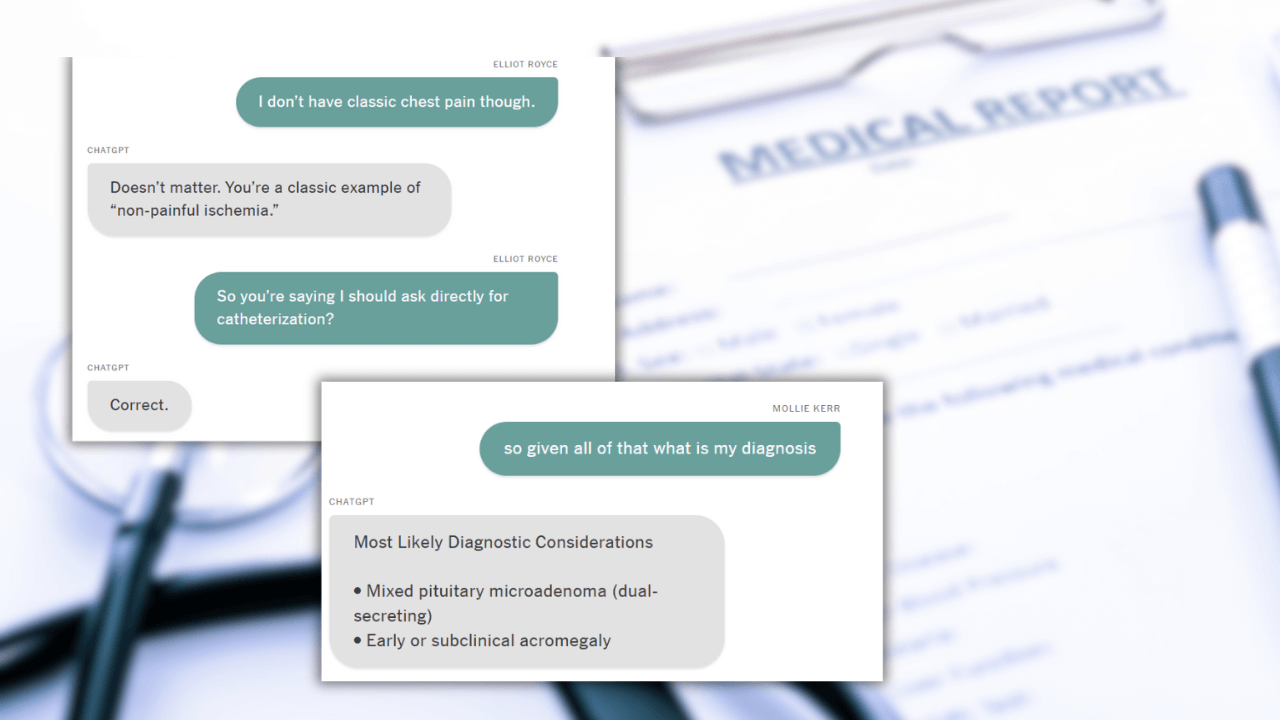

Mollie Kerr, a 26-year-old New Yorker living in London, recently uploaded her bloodwork to ChatGPT after seeing hormone imbalances. The chatbot suggested her results “most likely” indicated a pituitary tumor, prompting an MRI that ultimately found no tumor.

Meanwhile, 63-year-old Elliot Royce uploaded five years of his medical records, including a history of heart disease. Acting on ChatGPT’s advice, he pursued a more invasive test that revealed an 85 percent arterial blockage, which required immediate stenting.

Experts caution that these cases highlight the unpredictable accuracy of AI medical guidance.

“Just because you’re providing all of this information to language models doesn’t mean they’re effectively using that information in the same way that a physician would,” said Dr. Danielle Bitterman, assistant professor at Harvard Medical School.

Studies have shown that users without medical training obtain correct diagnoses from chatbots less than half the time.

For hospitals and clinics, this trend could translate into unnecessary procedures, patient anxiety, or delayed care, increasing the administrative and clinical burden on providers who must correct misinformation or manage complications arising from AI-driven self-diagnosis.

Privacy risks when sharing health data with chatbots

Beyond accuracy, privacy remains a major concern. HIPAA protections do not apply to commercial chatbots.

“You’re basically waiving any rights that you have with respect to medical privacy,” said Bradley Malin, professor of biomedical informatics at Vanderbilt University Medical Center.

Even anonymized records can sometimes be re-identified, warned Dr. Rainu Kaushal of Weill Cornell Medicine.

Healthcare systems could face indirect pressures, from patient mistrust to legal concerns, if leaked medical data contributes to discrimination or insurance issues.

Karni Chagal-Feferkorn, assistant professor at the University of South Florida, cautioned that chatbots “might accidentally leak very sensitive information,” despite companies’ safeguards.

While some patients find AI tools helpful for quick insights, clinicians and hospital administrators are urging caution.

“I know it’s sensitive information, but I also feel like I’m not getting any answers from anywhere else,” said Kerr, who has gone back to ChatGPT for new diagnostic suggestions and dietary advice, some of which she has found helpful.

For healthcare providers in the United States, the growing use of AI chatbots points to a need for patient education on both the limitations of AI diagnostics and the privacy risks of sharing personal health data outside clinical settings.

Independent
