Microsoft AI team mistakenly leaks 38TB of data

WASHINGTON, UNITED STATES — Microsoft’s artificial intelligence (AI) research team exposed a massive 38 terabytes of sensitive corporate data in a major security lapse.

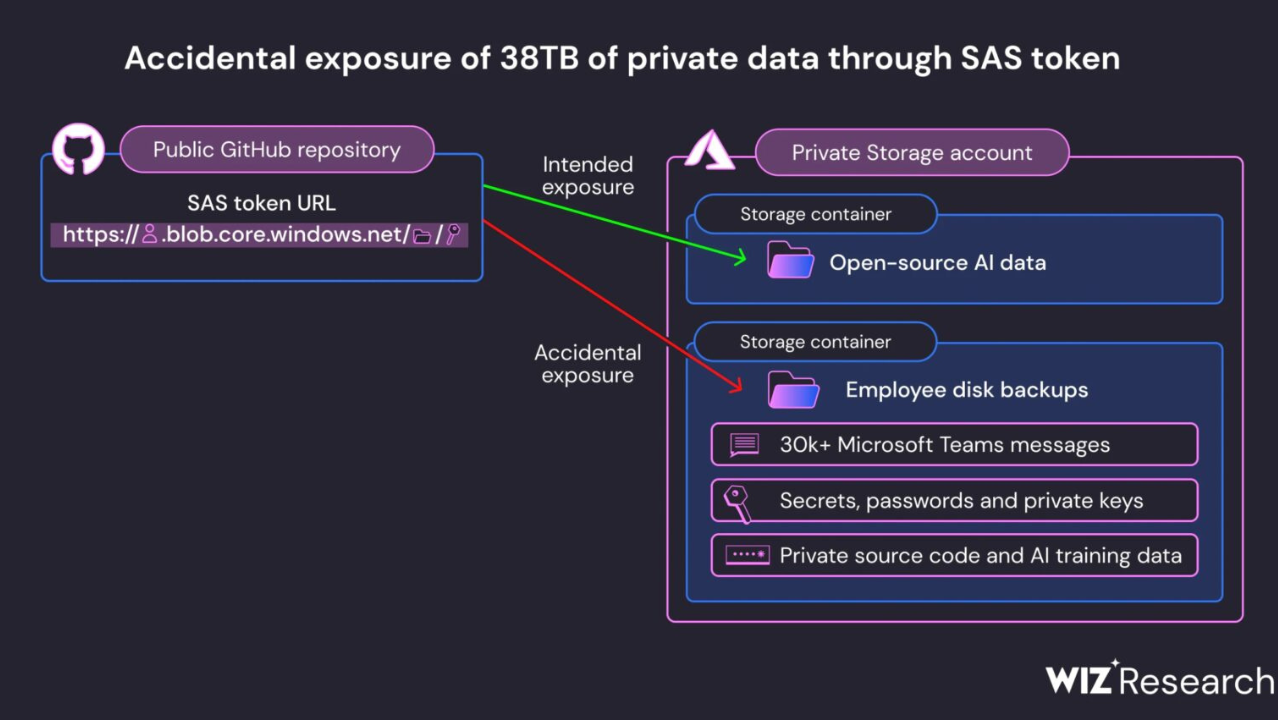

The exposure stemmed from a misconfigured link to the company’s Azure cloud storage, which put passwords, encryption keys, internal messages, and other confidential information from more than 350 employees at risk.

The exposure was traced to an Azure storage account that Microsoft’s AI team used to host open-source code and image recognition models. An improperly configured Azure Shared Access Signature (SAS) token granted unrestricted access to the entire storage account instead of limiting access to the files meant to be shared.
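To illustrate the kind of misconfiguration described above, here is a minimal sketch of how a shared Azure Shared Access Signature link could be checked before it is published. It relies on the standard SAS query parameters (`sp` for permissions, `se` for expiry, `srt` for account-level resource types). The function name `audit_sas_url`, the seven-day lifetime limit, the read/list-only policy, and the example URLs are all assumptions made for illustration; they do not come from Microsoft or Wiz.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import urlparse, parse_qs

# Hypothetical policy for this sketch: links may only read/list and must expire within a week.
READ_ONLY = {"r", "l"}
MAX_LIFETIME = timedelta(days=7)


def audit_sas_url(url, now=None):
    """Return a list of warnings about an Azure SAS URL's scope, permissions, and expiry."""
    now = now or datetime.now(timezone.utc)
    # parse_qs returns lists of values; keep the first value of each parameter.
    params = {key: values[0] for key, values in parse_qs(urlparse(url).query).items()}
    findings = []

    # 'srt' appears only on account-level SAS tokens, which can span the whole storage account.
    if "srt" in params:
        findings.append("account-level SAS: scope may cover the entire storage account")

    # Each letter in 'sp' is one permission (r=read, l=list, w=write, d=delete, ...).
    extra = set(params.get("sp", "")) - READ_ONLY
    if extra:
        findings.append("permissions beyond read/list: " + "".join(sorted(extra)))

    expiry = params.get("se")
    if expiry is None:
        findings.append("no expiry in URL (it may come from a stored access policy)")
    else:
        # Older Python versions do not accept a trailing 'Z' in fromisoformat.
        expires_at = datetime.fromisoformat(expiry.replace("Z", "+00:00"))
        if expires_at.tzinfo is None:
            expires_at = expires_at.replace(tzinfo=timezone.utc)
        if expires_at - now > MAX_LIFETIME:
            findings.append("expiry is more than 7 days away: " + expiry)

    return findings


# Made-up example URL; the account name, dates, and signature are placeholders.
broad_url = (
    "https://example.blob.core.windows.net/models"
    "?sv=2020-08-04&ss=b&srt=sco&sp=rwdlac&se=2051-01-01T00:00:00Z&sig=REDACTED"
)
for warning in audit_sas_url(broad_url):
    print(warning)
```

A check like this only inspects what is visible in the URL. It cannot tell whether the token has since been revoked, so it is best treated as a pre-publication sanity check rather than a full audit.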

Cloud security firm Wiz discovered the issue in June 2023 and reported it to Microsoft, which moved quickly to revoke the token and contain the exposure.

Remarkably, the security hole had existed since 2020, leaving the data exposed for years before it was found.

Wiz cautioned that these mistakes could become more common, saying, “This case is an example of the new risks organizations face when starting to leverage the power of AI more broadly, as more of their engineers now work with massive amounts of training data.”

“As data scientists and engineers race to bring new AI solutions to production, the massive amounts of data they handle require additional security checks and safeguards,” the firm added.

After an investigation, Microsoft issued a statement concluding that no customer information was compromised and internal systems remained secure.

Independent
