One-third of organizations using AI security tools — Gartner survey

CONNECTICUT, UNITED STATES — Thirty-four percent of organizations are already using artificial intelligence (AI) application security tools against risks from generative AI (GenAI) models, according to a new survey from Gartner, Inc.

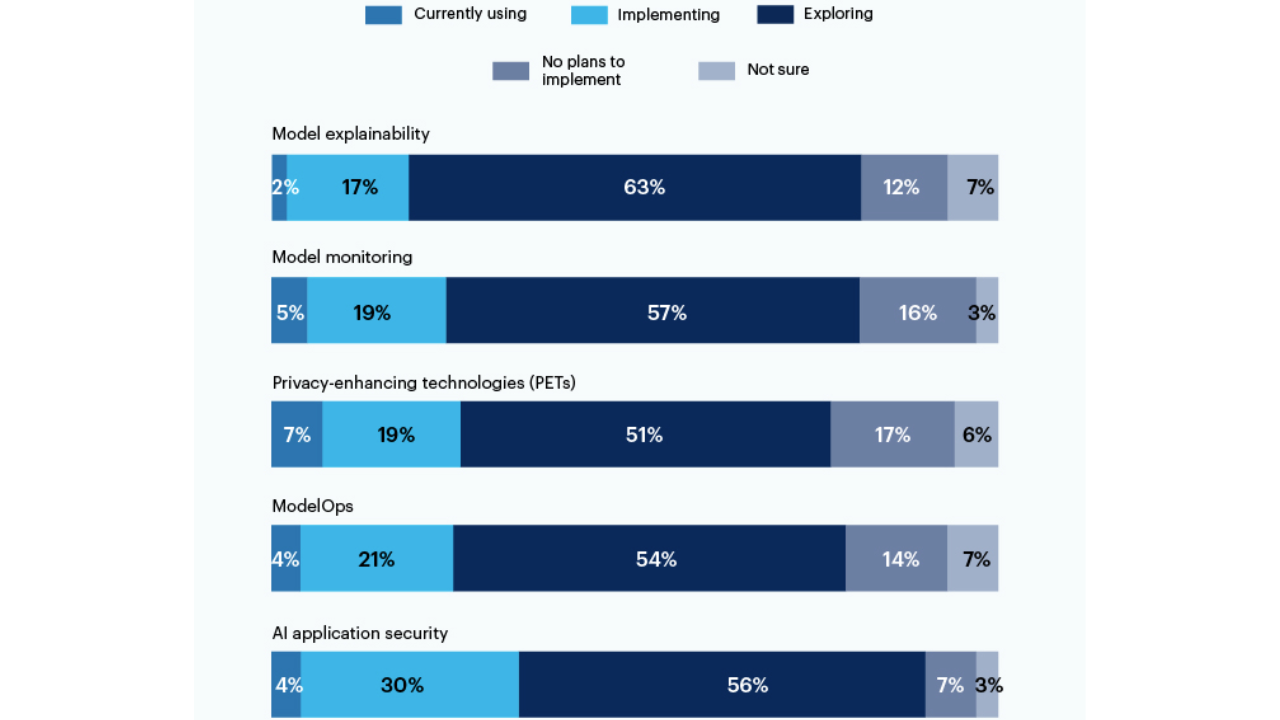

The survey of 150 IT and security leaders at companies using or exploring GenAI, conducted from April 1 to April 7, also found that 56% are exploring such solutions. The most frequently cited concerns were biased or incorrect outputs (58%) and leaked secrets in AI-generated code (57%).

“IT and security and risk management leaders must, in addition to implementing security tools, consider supporting an enterprise-wide strategy for AI TRiSM (trust, risk and security management),” said Avivah Litan, Distinguished VP Analyst at Gartner.

The survey also showed that 26% of respondents currently use privacy-enhancing technologies, 25% use ModelOps, and 24% use model monitoring. However, only 24% said they hold full ownership of AI security, while 44% said IT is responsible for GenAI security.

“Organizations that don’t manage AI risk will witness their models not performing as intended and, in the worst case, can cause human or property damage,” Litan added.

“This will result in security failures, financial and reputational loss, and harm to individuals from incorrect, manipulated, unethical or biased outcomes. AI malperformance can also cause organizations to make poor business decisions.”

Independent